Human-Computer Interaction by Voluntary Vergence Control

SIGGRAPH Asia 2018 Posters

Lingyan Ruan1, Miu-Ling Lam1, Bin Chen1

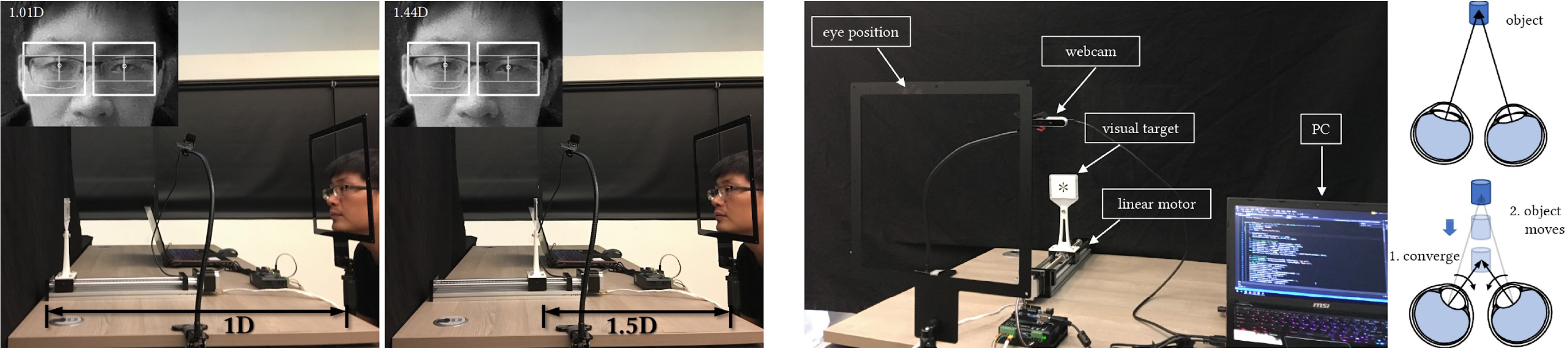

Most people can voluntarily control vergence eye movements. However, the interaction possibilities of using vergence as an active input remain largely unexplored. We present a novel human-computer interaction technique that allows a user to control the depth position of an object through voluntary vergence of the eyes. Our technique is similar to the mechanism for seeing the intended 3D image of an autostereogram, which requires cross-eyed or walleyed viewing. We invite the user to look at a visual target mounted on a linear motor, then consciously control the eye convergence to focus at a point in front of or behind the target. A camera measures the eye convergence, and the motion of the linear motor is controlled dynamically based on the measured distance. Our technique can enhance existing eye-tracking methods by providing additional information in the depth dimension, and has great potential for hands-free interaction and assistive applications.
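The step from measured eye convergence to a motor command can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes symmetric fixation straight ahead, so that the fixation distance follows from the interpupillary distance and the vergence angle by simple triangulation, and it assumes the target is then clamped to the travel range of the rail. The function names, the 63 mm interpupillary distance, and the rail limits are all hypothetical values chosen for illustration.

```python
import math


def vergence_angle(ipd_m: float, depth_m: float) -> float:
    """Vergence angle (radians) when both eyes fixate a point straight
    ahead at depth_m, assuming symmetric fixation (an assumption)."""
    return 2.0 * math.atan((ipd_m / 2.0) / depth_m)


def vergence_depth(ipd_m: float, vergence_rad: float) -> float:
    """Inverse of vergence_angle: fixation distance from a vergence angle.
    Parallel or diverging gaze is treated as fixation at infinity."""
    if vergence_rad <= 0.0:
        return math.inf
    return (ipd_m / 2.0) / math.tan(vergence_rad / 2.0)


def motor_target(depth_m: float, rail_min_m: float, rail_max_m: float) -> float:
    """Clamp the estimated fixation depth to the rail's travel range
    (hypothetical limits) to obtain a target position for the motor."""
    return min(max(depth_m, rail_min_m), rail_max_m)


if __name__ == "__main__":
    ipd = 0.063  # assumed interpupillary distance in metres
    theta = vergence_angle(ipd, 0.5)  # eyes converged at 0.5 m
    depth = vergence_depth(ipd, theta)
    print(f"vergence = {math.degrees(theta):.2f} deg, depth = {depth:.3f} m")
    print("motor target:", motor_target(depth, 0.2, 0.8))
```

Converging in front of the target increases the vergence angle and shortens the estimated depth, while relaxing the eyes toward parallel gaze lengthens it, so the clamped depth gives a target position that moves toward or away from the user accordingly.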